Posts Tagged bsimm

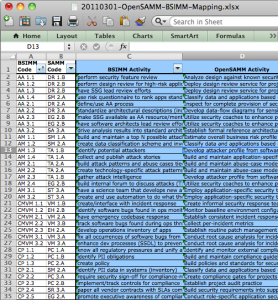

BSIMM activities mapped to SAMM

Posted by Pravir Chandra in Changes, Discussion on March 3rd, 2011

For the impatient, click here to download the mapping spreadsheet. For those still reading… Firstly, many thanks to the OWASP community for hosting the fantastic OWASP Summit 2011 in Lisbon, Portugal a few weeks back. It was a great forum for us to hold OpenSAMM working sessions to discuss experiences and potential improvements to the model. Over the course of the week, we built up a list of additions and changes we’d like to make in the next release, but I’ll cover those in more detail separately.

The main thing I want to share now is an activity-level mapping of the ~110 BSIMM2 activities to the corresponding 72 activities in SAMM. Obviously, this means that in some cases, more than one BSIMM activity may be mapped to a single SAMM activity. That being said, the overlap spots seem to make sense when we (the ~10 people that worked on it) looked at them in detail. Don’t take our word for it, though, please do review and send any feedback (mailing list or just comment below). And before you ask, yes, you probably will have to go read the respective BSIMM and SAMM activity descriptions in order to see the linkage for some of them (given the occasionally imprecise nature of written language, it’s not always obvious from the activity names alone).

It’s worth noting that we did leave two BSIMM activities unmapped: SM 3.2 “run external marketing program” and T 3.3 “host external software security events”. Based on the experience of the working group participants, these activities did not appear to directly improve an organization’s software assurance posture; rather, they appeared to be evidence that the organization was using its (presumably mature) software assurance posture to bolster its public perception or generate additional value in the business. Again, this is totally up for debate if anyone has an argument the other way, so please do share your thoughts.
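For readers who want to work with the mapping programmatically, here is a minimal sketch of the shape described above: a many-to-one mapping from BSIMM activities to SAMM activities, with a couple of entries left unmapped. It is not taken from the spreadsheet. Only the identifiers SM 3.2 and T 3.3 come from this post; every other identifier (`BSIMM-A`, `SAMM-1`, and so on) and both helper functions are hypothetical placeholders.

```python
from collections import defaultdict

# Hypothetical excerpt of a BSIMM -> SAMM mapping. Only "SM 3.2" and "T 3.3"
# come from the post; all other identifiers are made-up placeholders.
BSIMM_TO_SAMM = {
    "BSIMM-A": "SAMM-1",
    "BSIMM-B": "SAMM-1",  # more than one BSIMM activity can share a SAMM activity
    "BSIMM-C": "SAMM-2",
    "SM 3.2": None,       # left unmapped by the working group
    "T 3.3": None,        # left unmapped by the working group
}


def invert(mapping):
    """Group BSIMM activity IDs by the SAMM activity they map to."""
    by_samm = defaultdict(list)
    for bsimm_id, samm_id in mapping.items():
        if samm_id is not None:
            by_samm[samm_id].append(bsimm_id)
    return dict(by_samm)


def unmapped(mapping):
    """Return the BSIMM activity IDs that have no SAMM counterpart."""
    return sorted(b for b, s in mapping.items() if s is None)


SAMM_TO_BSIMM = invert(BSIMM_TO_SAMM)
UNMAPPED = unmapped(BSIMM_TO_SAMM)
```

Inverting the mapping makes the overlap spots easy to review: any SAMM activity whose list has more than one entry is a place where several BSIMM activities were judged to cover the same ground.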

Last, but certainly not least, I’d like to thank all the people at the Summit for the detailed and thoughtful conversations about using SAMM and about what we can do to make it even better. Special thanks go to those who contributed to and helped review this mapping (in no particular order):

- Colin Watson

- Seba Deleersnyder

- Steven van der Baan

- Bart De Win

- Justin Clarke

- Dan Cornell

- Sherif Koussa

- Brian Chess

Jeremy Epstein on the Value of a Maturity Model

Posted by admin in Discussion on June 18th, 2009

Security maturity models are the newest thing, and also a very old idea with a new name. If you look back 25 years to the dreaded Orange Book (also known as the Trusted Computer System Evaluation Criteria or TCSEC), it included two types of requirements – functional (i.e., features) and assurance. The way Orange Book specified assurance is through techniques like design documentation, use of configuration management, formal modeling, trusted distribution, independent testing, etc. Each of the requirements stepped up as the system moved from the lowest levels of assurance (D) to the highest (A1). Or in other words, to get a more secure system, you need a more mature security development process.

As an example, independent testing was a key part of the requirement set – for class C products (C1 and C2) vendors were explicitly required to provide independent testing by “at least two individuals with bachelor degrees in Computer Science or the equivalent. Team members shall be able to follow test plans prepared by the system developer and suggest additions, shall be familiar with the ‘flaw hypothesis’ or equivalent security testing methodology, and shall have assembly level programming experience. Before testing begins, the team members shall have functional knowledge of, and shall have completed the system developer’s internals course for, the system being evaluated.” [TCSEC section 10.1.1] Further, “The team shall have ‘hands-on’ involvement in an independent run of the tests used by the system developer. The team shall independently design and implement at least five system-specific tests in an attempt to circumvent the security mechanisms of the system. The elapsed time devoted to testing shall be at least one month and need not exceed three months. There shall be no fewer than twenty hands-on hours spent carrying out system developer-defined tests and test team-defined tests.” [TCSEC section 10.1.2] The requirements increase as the level of assurance goes up; class A systems require testing by “at least one individual with a bachelor’s degree in Computer Science or the equivalent and at least two individuals with masters’ degrees in Computer Science or equivalent” [TCSEC section 10.3.1] and the effort invested “shall be at least three months and need not exceed six months. There shall be no fewer than fifty hands-on hours per team member spent carrying out system developer-defined tests and test team-defined tests.”

In the past 25 years since the TCSEC, there have been dozens of efforts to define maturity models to emphasize security. Most (probably all!) of them are based on wishful thinking: if only we’d invest more in various processes, we’d get more secure systems. Unfortunately, with very minor exceptions, the recommendations for how to build more secure software are based on “gut feel” and not any metrics.

In early 2008, I was working for a medium-sized software vendor. To try to convince my management that they should invest in software security, I contacted friends and friends-of-friends in a dozen software companies, and asked them what techniques and processes their organizations use to improve the security of their products, and what motivated their organizations to make investments in security. The results of that survey showed that there’s tremendous variation from one organization to another, and that some of the lowest-tech solutions like developer training are believed to be most effective. I say “believed to be” because even now, no one has metrics to measure effectiveness. I didn’t call my results a maturity model, but that’s what I found – organizations with radically different maturity models, frequently driven by a single individual who “sees the light”. [A brief summary of the survey was published as “What Measures do Vendors Use for Software Assurance?” at the Making the Business Case for Software Assurance Workshop, Carnegie Mellon University Software Engineering Institute, September 2008. A more complete version is in preparation.]

So how do security maturity models like OpenSAMM and BSIMM fit into this picture? Both have done a great job cataloging, updating, and organizing many of the “rules of thumb” that have been used over the past few decades for investing in software assurance. By defining a common language to describe the techniques we use, these models will enable us to compare one organization to another, and will help organizations understand areas where they may be more or less advanced than their peers. However, they still won’t tell us which techniques are the most cost effective methods to gain assurance.

Which raises the question – which is the better model? My answer is simple: it doesn’t really matter. Both are good structures for comparing an organization to a benchmark. Neither has metrics to show which techniques are cost effective and which are just things that we hope will have a positive impact. We’re not yet at the point of VHS vs. Betamax or Blu-ray vs. HD DVD, and we may never get there. Since these are process standards, not technical standards, moving in the direction of either BSIMM or OpenSAMM will help an organization advance – and waiting for the dust to settle just means it will take longer to catch up with other organizations.

Or in short: don’t let the perfect be the enemy of the good. For software assurance, it’s time to get moving now.

About the Author

Jeremy Epstein is Senior Computer Scientist at SRI International, where he’s involved in various types of computer security research. Over 20+ years in the security business, Jeremy has done research in multilevel systems and voting equipment, led security product development teams, been involved in far too many government certifications, and tried his hand at consulting. He’s published dozens of articles in industry magazines and research conferences. Jeremy earned a B.S. from New Mexico Tech and an M.S. from Purdue University.

OWASP Podcast about SAMM

Posted by Pravir Chandra in Press on March 25th, 2009

I recorded an OWASP Podcast episode with Jim Manico and it just went live. We discuss the new SAMM release, some of the project’s history, and, of course, some other favorite projects of mine. Jim is a great host and I can’t wait to get invited for another!

What’s up with the other model?

Posted by Pravir Chandra in Discussion on March 6th, 2009

A day or two back, Cigital and Fortify released another maturity model named the Building Security In Maturity Model (BSI-MM). I’ve had lots of folks ask me about it and how it’s related to SAMM, so I figured I should write a post about it. The short answer: they’re different (BSIMM forked from the SAMM Beta). The long answer? Keep reading…

So, a long time ago in a galaxy far… ahem… actually, it was last July (2008). Brian Chess and I had a drink at RSA and discussed what I’d be doing with my time now that I’d left Cigital to start independent consulting. I was really focused on using my newfound spare time to build the next revision to CLASP. In my vision (which I talked about as early as the OWASP EU conference in Milan in May of 2007), there would be a model that demonstrated how to logically improve individual security functions over time, along with a collection of prescriptive roadmaps based on the organization type.

Brian and Fortify gave me a contract to fund development of what would become the SAMM Beta. Once the Beta was complete last August, Gary McGraw (who sits on Fortify’s Technical Advisory Board) got word of SAMM and wanted to get Cigital involved. We had one meeting for Cigital to provide feedback on SAMM, but it was clear to me that they wanted to take the model in a different direction than I had wanted (lots of reasons here, but one objection I had was the use of branding/marketing terminology). So, we forked.

Gary, Brian, and Sammy (and maybe others) massaged the high-level framework from SAMM into what they call their Software Security Framework (SSF). They took this out to 9 big companies with advanced secure development practices to get feedback on what those companies are actually doing. Though I really liked the idea of collecting that data, I wasn’t involved at all. Based on what they learned from SAMM and what they heard from those 9, they created the BSI-MM. So, even though the models may seem similar in structure, they’re different in terms of content.

Just as a disclaimer on the current state of things, I have not worked with the folks at Cigital, but I’m still actively collaborating with folks at Fortify who are supporting both models (and maybe others too!). If folks are interested, I’ll write up more about SAMM vs. BSI-MM once the next release of SAMM comes out next week.